Apify differs from regular web scraping APIs in many ways. It functions more like a general-purpose cloud platform that lets developers run arbitrary Docker containers (which it calls "actors"), with web scraping as one of its primary use cases and core features. Accordingly, it offers a proxy network, and its proxies are integrated into third-party developers' custom scrapers. Here's a quick real-world example: a third-party developer decided to build an actor to scrape TikTok. They built the actor's Docker image and published it to the Apify marketplace: https://apify.com/clockworks/tiktok-scraper. Now you can use this actor to scrape TikTok, and you essentially pay for three things:

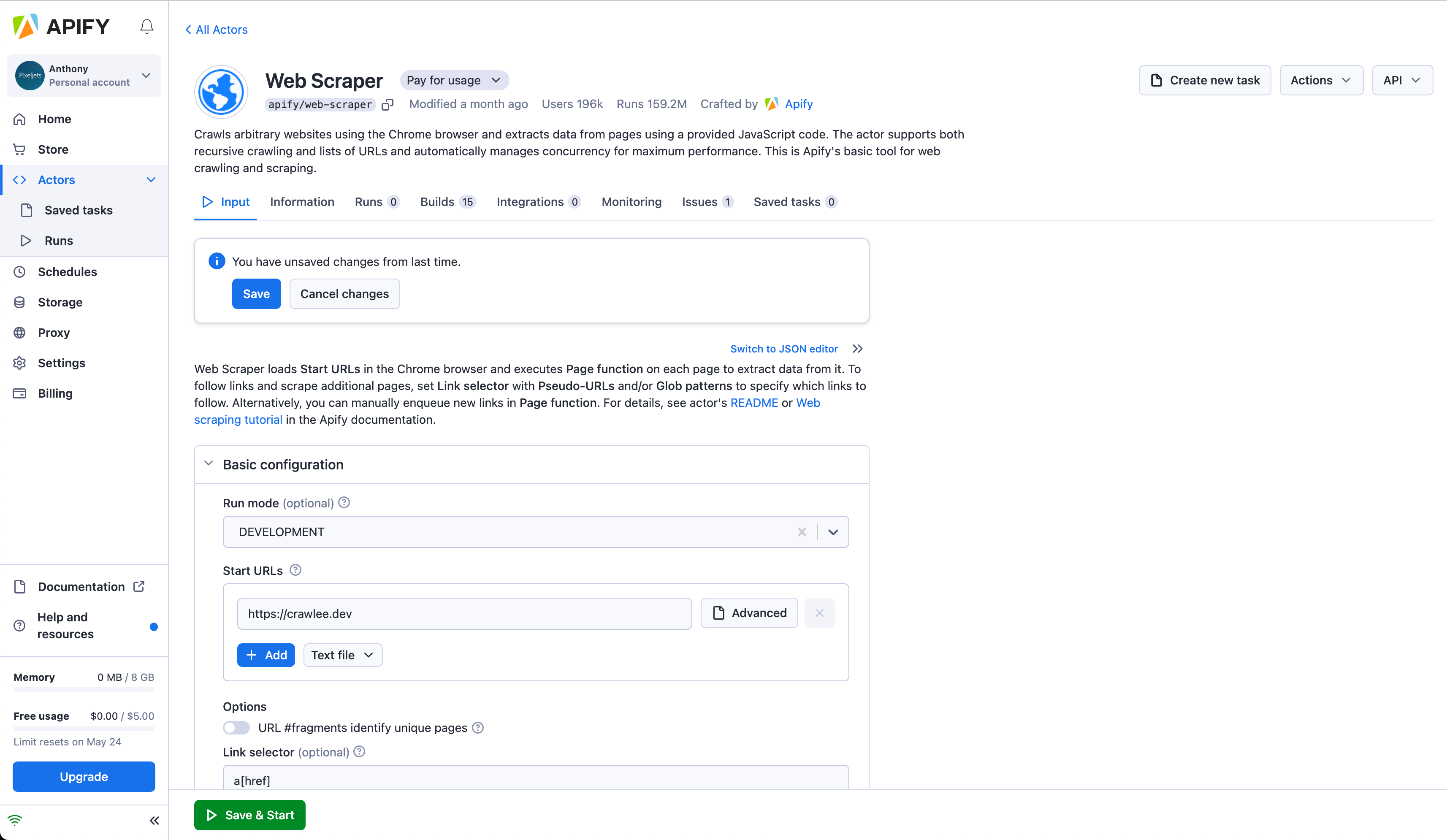

1. The actor's usage
2. Apify's infrastructure usage (CPU, RAM, bandwidth)
3. Apify's proxy network usage

You can imagine that this becomes complicated in terms of pricing. Although Apify has a free plan for its infrastructure usage, you cannot really scrape TikTok on this plan because you need to pay USD 45 per month for the TikTok actor. Each actor has its own pricing, and you pay monthly for every actor you subscribe to. This is why I decided to mark Apify as "not free" in the table above: once you decide to scrape a real-world website, it's not truly free, nor is it cheap. I should note that basic scraping actors, such as https://apify.com/apify/web-scraper, are free, so there you only pay for infrastructure and proxy usage.
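
To make the three cost components concrete, here is a rough back-of-the-envelope sketch. Only the USD 45 monthly rental for the TikTok actor comes from the text above; the infrastructure and proxy figures are hypothetical placeholders, not Apify's actual rates, and the `MonthlyCost` class is just an illustration.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCost:
    """The three things you pay for when running an actor (USD per month)."""
    actor_rental_usd: float      # set by the actor's developer
    infrastructure_usd: float    # CPU, RAM, bandwidth on Apify's platform
    proxy_usd: float             # Apify's proxy network

    @property
    def total_usd(self) -> float:
        return self.actor_rental_usd + self.infrastructure_usd + self.proxy_usd


# Hypothetical usage figures, identical for both scenarios.
ASSUMED_INFRASTRUCTURE_USD = 10.0
ASSUMED_PROXY_USD = 5.0

# Paid third-party actor: USD 45/month rental (figure from the article).
tiktok = MonthlyCost(45.0, ASSUMED_INFRASTRUCTURE_USD, ASSUMED_PROXY_USD)

# Free basic actor (e.g. apify/web-scraper): no rental fee.
generic = MonthlyCost(0.0, ASSUMED_INFRASTRUCTURE_USD, ASSUMED_PROXY_USD)

print(f"TikTok actor:  USD {tiktok.total_usd:.2f} per month")
print(f"Generic actor: USD {generic.total_usd:.2f} per month")
```

With these assumed numbers, the actor rental dominates the bill, which is why a free infrastructure plan alone does not make a real-world scraping job free.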

At the same time, I appreciate the concept of the Apify ecosystem. The really challenging aspect of running web scraping (if it's not a one-off task) is that each scraper requires significant maintenance, which quickly becomes time-consuming and burdensome. Apify lets you outsource this maintenance to third-party developers, who are incentivized because Apify shares a portion of the profit with them. End customers simply use these scrapers, while the developers maintain them. Apify's own development team also maintains many scrapers that you can use.

Although Apify boasts numerous features and integrations, it is not as user-friendly as other web scraping APIs because it introduces many new concepts. It also generally represents a non-realtime approach: each scraper is typically a Docker image that runs on demand, can crawl many pages, and stores the results in Apify storage for later download.

However, this is also Apify's strength: third-party developers create and publish their own scrapers tailored to specific websites, which you can use as building blocks for your own scrapers.
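
As an illustration of the run-on-demand, download-later workflow, and of using a published actor as a building block, here is a minimal sketch using only the Python standard library. It assumes Apify's public REST API (v2) endpoints for starting a run, checking a run, and reading dataset items; the helper names `http_json` and `run_actor_and_fetch` are my own, and the input you pass is actor-specific, so check the actor's documentation for its input schema.

```python
import json
import time
import urllib.request

API = "https://api.apify.com/v2"
# Run statuses after which the run will not change any more.
TERMINAL = {"SUCCEEDED", "FAILED", "TIMED-OUT", "ABORTED"}


def http_json(method, url, token, body=None):
    """Send one authenticated request and decode the JSON response."""
    data = json.dumps(body).encode() if body is not None else None
    req = urllib.request.Request(url, data=data, method=method, headers={
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
    })
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def run_actor_and_fetch(actor_id, run_input, token,
                        request=http_json, poll_seconds=5):
    """Start an actor run, wait for it to finish, then download its results."""
    # 1. Start the run. In API paths, "user/actor" is written "user~actor".
    path_id = actor_id.replace("/", "~")
    run = request("POST", f"{API}/acts/{path_id}/runs", token, run_input)["data"]

    # 2. The run is asynchronous, so poll until it reaches a terminal status.
    while run["status"] not in TERMINAL:
        time.sleep(poll_seconds)
        run = request("GET", f"{API}/actor-runs/{run['id']}", token)["data"]
    if run["status"] != "SUCCEEDED":
        raise RuntimeError(f"Run {run['id']} ended with status {run['status']}")

    # 3. Results were stored in the run's default dataset; download them.
    dataset_id = run["defaultDatasetId"]
    return request("GET", f"{API}/datasets/{dataset_id}/items?format=json", token)
```

You would call it as `run_actor_and_fetch("clockworks/tiktok-scraper", run_input, token)`, where `token` is your Apify API token and `run_input` is a dict matching that actor's input schema. The `request` parameter exists only so the HTTP layer can be swapped out, for example in tests.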

I should also note that Apify maintains Crawlee, a popular open-source Node.js web scraping library, which is often used as a foundation for web scraping actors.